Attention Is All You Need for Chinese Word Segmentation

Sufeng Duan, Hai Zhao

Phonology, Morphology and Word Segmentation Long Paper

Abstract:

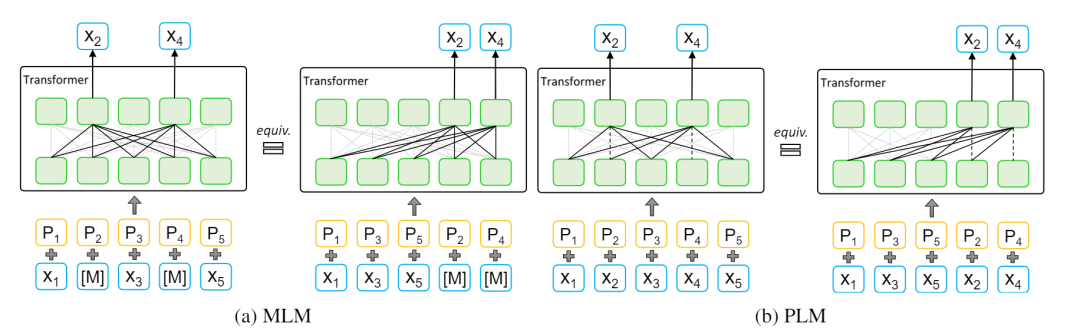

Taking the greedy decoding algorithm as given, this work focuses on strengthening the model itself for Chinese word segmentation (CWS), which yields an even faster and more accurate CWS model. Our model consists of an attention-only stacked encoder and a lightweight decoder for greedy segmentation, plus two highway connections for smoother training. The encoder is composed of a newly proposed Transformer variant, the Gaussian-masked Directional (GD) Transformer, and a biaffine attention scorer. Thanks to this encoder design, our model needs only unigram features for scoring. We evaluate the model on the SIGHAN Bakeoff benchmark datasets. The experimental results show that, with the highest segmentation speed, the proposed model achieves new state-of-the-art or comparable performance against strong baselines under the strict closed test setting.
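The greedy segmentation step mentioned in the abstract can be illustrated with a minimal Python sketch. It assumes, purely for illustration and not as the authors' implementation, that a scorer has already produced one boundary score per gap between adjacent characters. The function name `greedy_segment`, the `threshold` parameter, and the example scores are all hypothetical.

```python
from typing import List, Sequence


def greedy_segment(
    chars: str, gap_scores: Sequence[float], threshold: float = 0.5
) -> List[str]:
    """Split `chars` at every gap whose boundary score exceeds `threshold`.

    gap_scores[i] is a hypothetical score that a word boundary lies
    between chars[i] and chars[i + 1]. Each gap is decided on its own,
    in a single left-to-right pass, with no search over alternatives.
    """
    if len(gap_scores) != max(len(chars) - 1, 0):
        raise ValueError("expected one score per gap between adjacent characters")

    words: List[str] = []
    start = 0
    for i, score in enumerate(gap_scores):
        if score > threshold:
            # Close the current word right after character i.
            words.append(chars[start : i + 1])
            start = i + 1
    if start < len(chars):
        # Whatever remains after the last boundary is the final word.
        words.append(chars[start:])
    return words


# Illustrative scores only; they are not taken from the paper.
example = greedy_segment("我爱北京", [0.9, 0.8, 0.1])
print(example)
```

Because each gap is decided independently, the pass is linear in the sentence length, which is in keeping with the abstract's emphasis on segmentation speed.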

Connected Papers in EMNLP2020

Similar Papers

A Joint Multiple Criteria Model in Transfer Learning for Cross-domain Chinese Word Segmentation

Kaiyu Huang, Degen Huang, Zhuang Liu, Fengran Mo

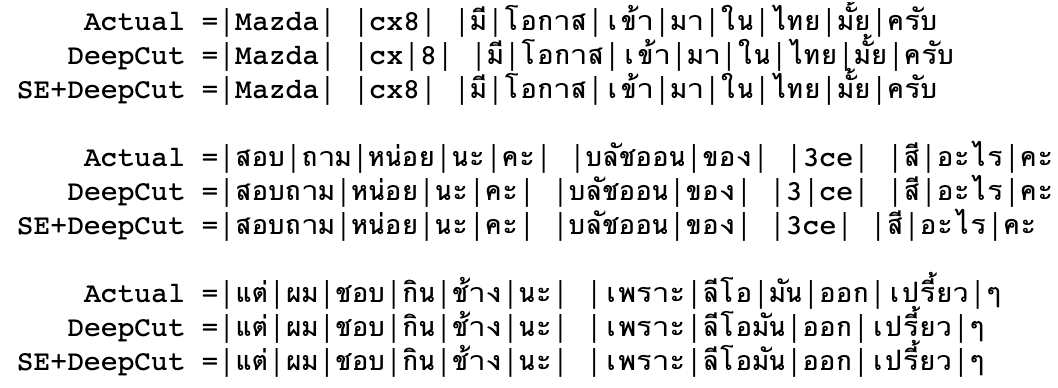

Domain Adaptation of Thai Word Segmentation Models using Stacked Ensemble

Peerat Limkonchotiwat, Wannaphong Phatthiyaphaibun, Raheem Sarwar, Ekapol Chuangsuwanich, Sarana Nutanong

Losing Heads in the Lottery: Pruning Transformer Attention in Neural Machine Translation

Maximiliana Behnke, Kenneth Heafield

Modeling Content Importance for Summarization with Pre-trained Language Models

Liqiang Xiao, Lu Wang, Hao He, Yaohui Jin