F2-Softmax: Diversifying Neural Text Generation via Frequency Factorized Softmax

Byung-Ju Choi, Jimin Hong, David Park, Sang Wan Lee

Language Generation, Long Paper

Abstract:

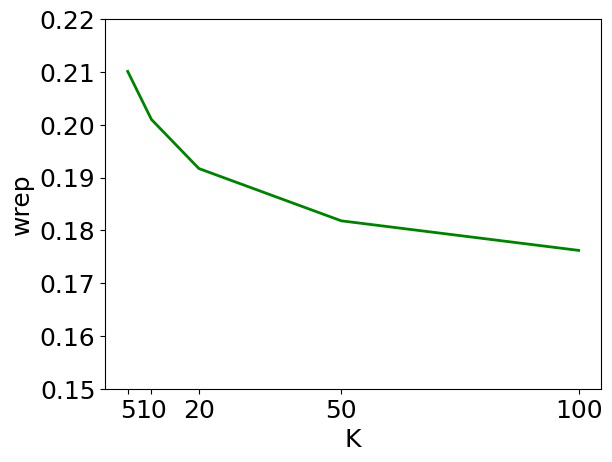

Despite recent advances in neural text generation, encoding the rich diversity in human language remains elusive. We argue that sub-optimal text generation is mainly attributable to the imbalanced token distribution, which particularly misdirects the learning model when it is trained with the maximum-likelihood objective. As a simple yet effective remedy, we propose two novel methods, F^2-Softmax and MefMax, for balanced training even with a skewed frequency distribution. MefMax assigns each token to exactly one frequency class, trying to group tokens with similar frequencies and to equalize frequency mass across the classes. F^2-Softmax then decomposes the probability distribution of the target token into a product of two conditional probabilities: (1) that of the frequency class, and (2) that of the token within the target frequency class. Models learn more uniform probability distributions because they are confined to subsets of the vocabulary. Significant performance gains on seven relevant metrics suggest the superiority of our approach in improving not only the diversity but also the quality of generated texts.
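The two steps described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: `assign_frequency_classes` is a hypothetical greedy stand-in for MefMax that only cuts the frequency-sorted vocabulary into groups of roughly equal frequency mass, and `factorized_prob` is an assumed reading of the decomposition p(token) = p(class) × p(token | class). All function names, the toy counts, and the logits are made up for illustration.

```python
import math


def assign_frequency_classes(token_counts, num_classes):
    """Greedy stand-in for MefMax (NOT the paper's algorithm).

    Sorts tokens by frequency and cuts the sorted list into contiguous
    groups whose total frequency mass is roughly equal.
    """
    ordered = sorted(token_counts, key=token_counts.get, reverse=True)
    target = sum(token_counts.values()) / num_classes
    classes, mass, current = {}, 0.0, 0
    for tok in ordered:
        # Open the next class once the mass seen so far fills the current share.
        if mass >= target * (current + 1) and current < num_classes - 1:
            current += 1
        classes[tok] = current
        mass += token_counts[tok]
    return classes


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def factorized_prob(token, class_logits, token_logits, classes):
    """p(token) = p(class) * p(token | class), with the second softmax
    restricted to the tokens that share the target token's class."""
    c = classes[token]
    p_class = softmax(class_logits)[c]
    members = [t for t in classes if classes[t] == c]
    p_within = softmax([token_logits[t] for t in members])
    return p_class * p_within[members.index(token)]


# Toy vocabulary with a skewed frequency distribution (invented numbers).
counts = {"the": 50, "of": 30, "cat": 10, "dog": 6, "zebra": 4}
classes = assign_frequency_classes(counts, num_classes=2)

# Uniform logits, purely for illustration.
class_logits = [0.0, 0.0]
token_logits = {t: 0.0 for t in counts}
probs = {t: factorized_prob(t, class_logits, token_logits, classes) for t in counts}
```

In this toy setup the single most frequent token fills one class on its own while the remaining tokens share the other, so each class carries half of the frequency mass and the second softmax only ever normalizes over tokens of comparable frequency.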

Connected Papers in EMNLP2020

Similar Papers

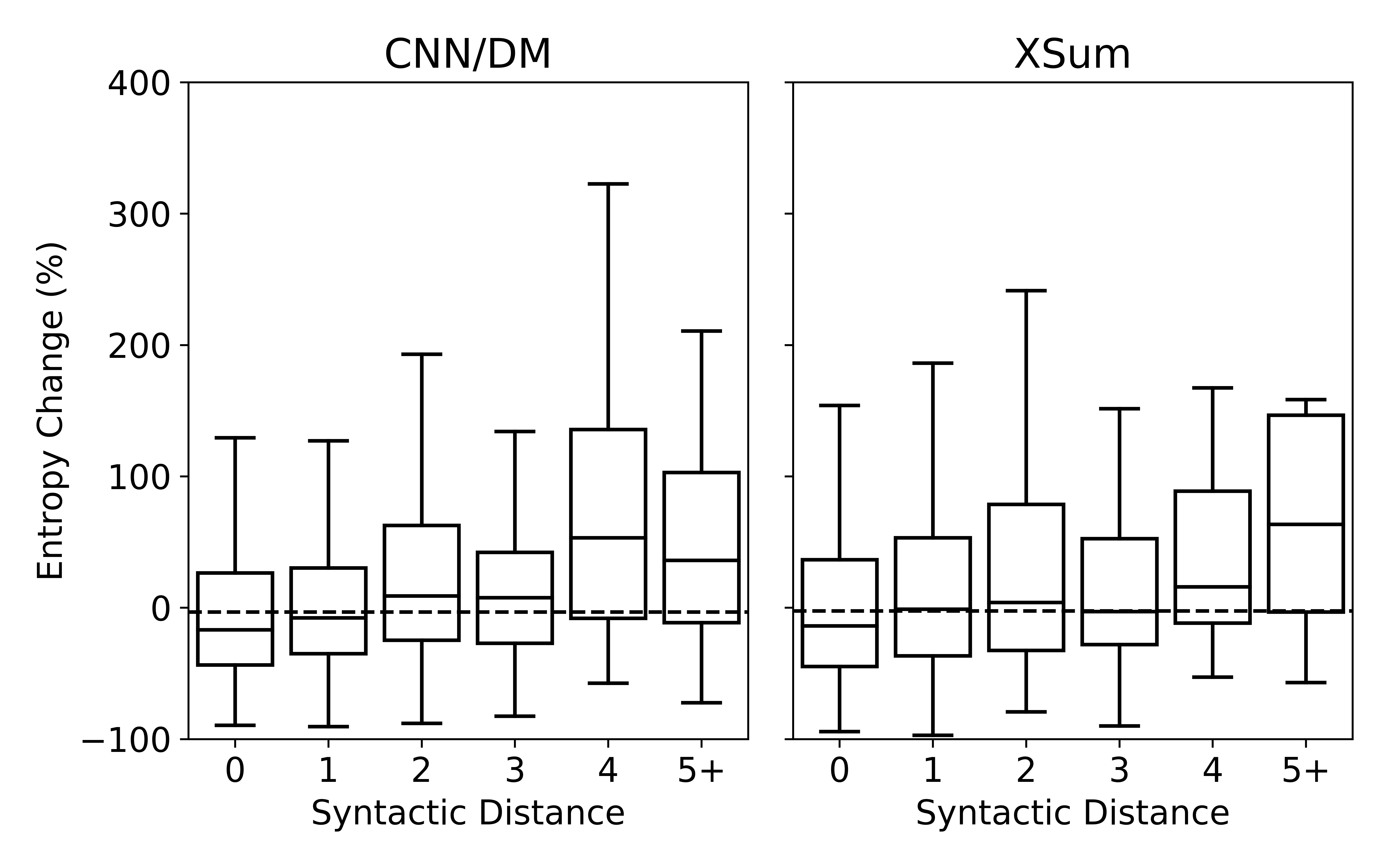

Understanding Neural Abstractive Summarization Models via Uncertainty

Jiacheng Xu, Shrey Desai, Greg Durrett

Q-learning with Language Model for Edit-based Unsupervised Summarization

Ryosuke Kohita, Akifumi Wachi, Yang Zhao, Ryuki Tachibana