Friendly Topic Assistant for Transformer Based Abstractive Summarization

Zhengjue Wang, Zhibin Duan, Hao Zhang, Chaojie Wang, Long Tian, Bo Chen, Mingyuan Zhou

Summarization Long Paper

Abstract:

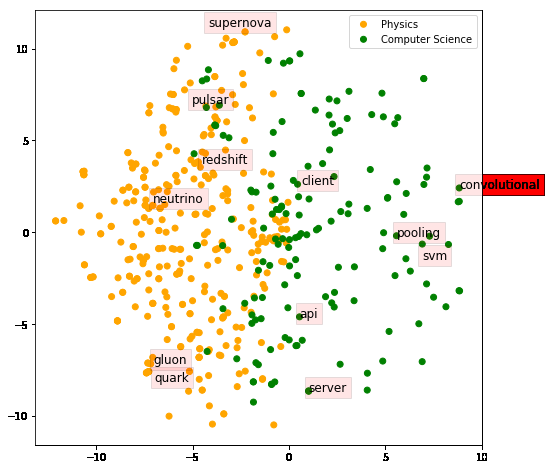

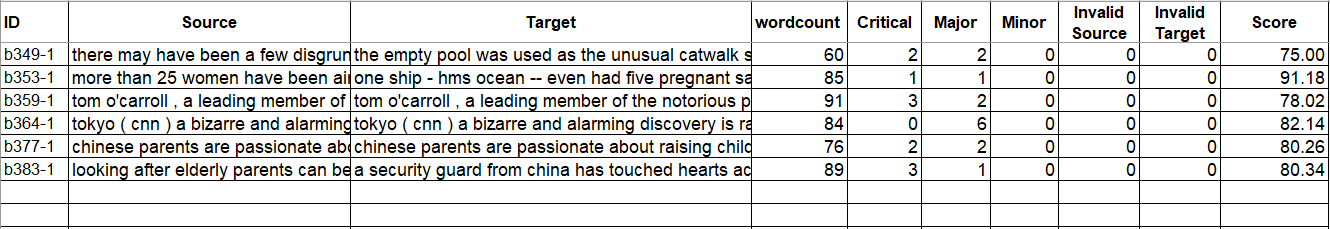

Abstractive document summarization is a comprehensive task that involves both document understanding and summary generation, and Transformer-based models have achieved state-of-the-art performance in this area. Compared with Transformers, topic models are better at learning explicit document semantics, and hence could be integrated into Transformers to further boost their performance. To this end, we rearrange and explore the semantics learned by a topic model, and then propose a topic assistant (TA) comprising three modules. TA is compatible with various Transformer-based models and is user-friendly because i) TA is a plug-and-play model that does not break any structure of the original Transformer network, so users can easily fine-tune Transformer+TA starting from a well pre-trained model; and ii) TA introduces only a small number of extra parameters. Experimental results on three datasets demonstrate that TA improves the performance of several Transformer-based models.

Connected Papers in EMNLP2020

Similar Papers

Q-learning with Language Model for Edit-based Unsupervised Summarization

Ryosuke Kohita, Akifumi Wachi, Yang Zhao, Ryuki Tachibana

On Extractive and Abstractive Neural Document Summarization with Transformer Language Models

Jonathan Pilault, Raymond Li, Sandeep Subramanian, Chris Pal

What Have We Achieved on Text Summarization?

Dandan Huang, Leyang Cui, Sen Yang, Guangsheng Bao, Kun Wang, Jun Xie, Yue Zhang

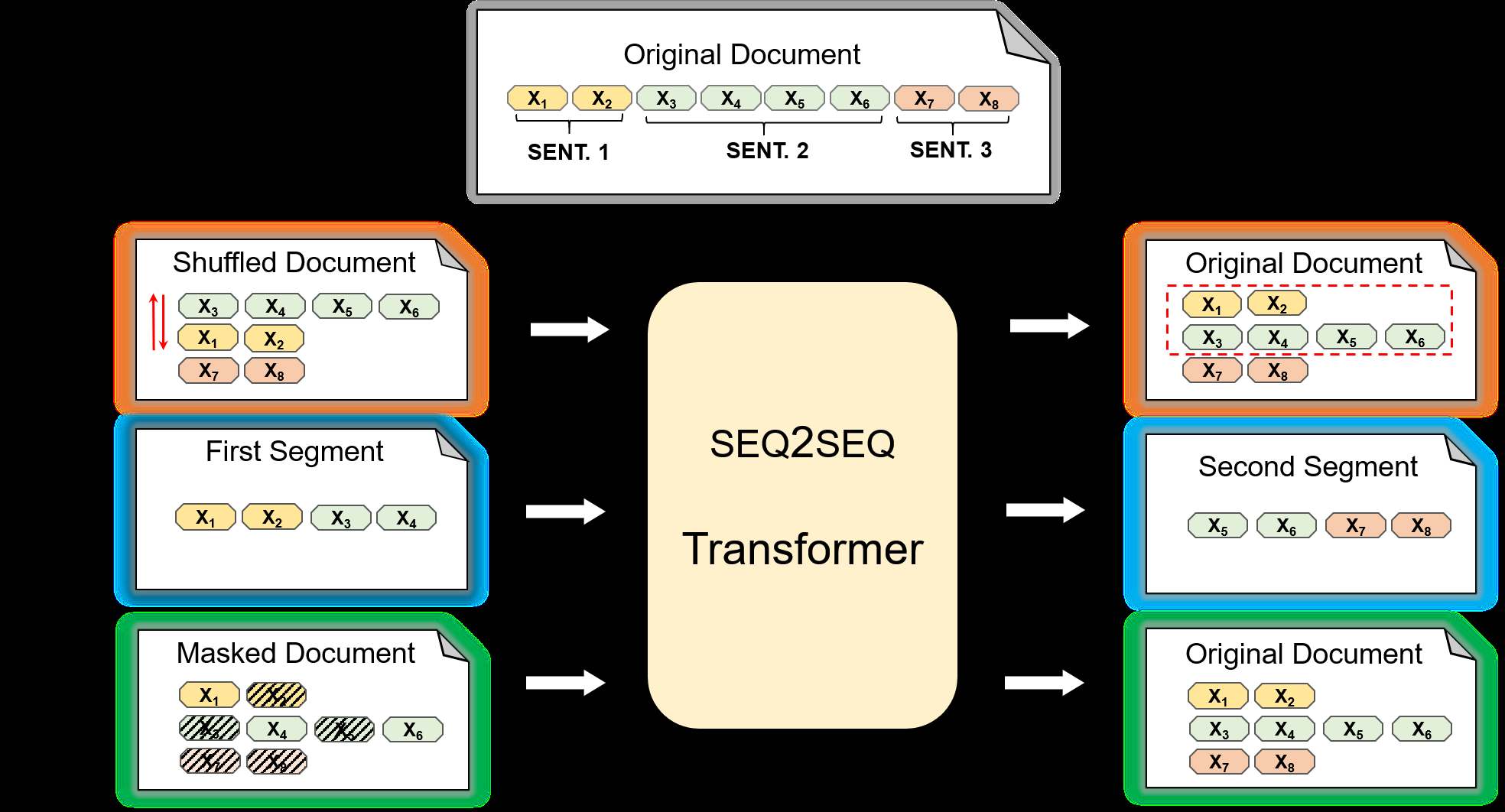

Pre-training for Abstractive Document Summarization by Reinstating Source Text

Yanyan Zou, Xingxing Zhang, Wei Lu, Furu Wei, Ming Zhou