Short Text Topic Modeling with Topic Distribution Quantization and Negative Sampling Decoder

Xiaobao Wu, Chunping Li, Yan Zhu, Yishu Miao

Information Retrieval and Text Mining Long Paper

Abstract:

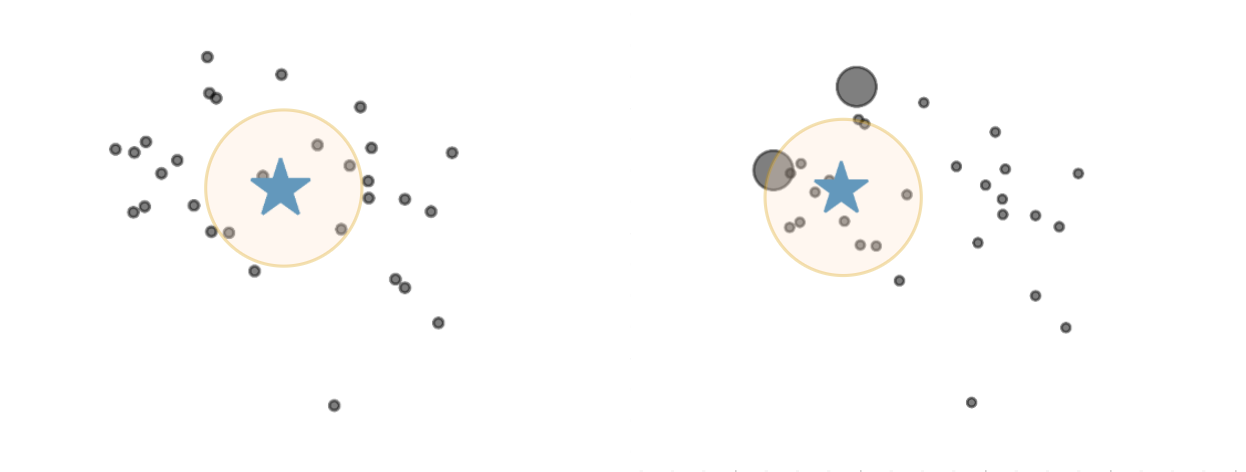

Topic models have long been used to discover latent semantics in long documents. On short texts, however, they generally suffer from data sparsity because word co-occurrences are extremely limited, and so they tend to yield repetitive or trivial, low-quality topics. To address this issue, we propose a novel neural topic model in the autoencoding framework with a new topic distribution quantization approach that generates peakier distributions, which are more appropriate for modeling short texts. Beyond the encoding side, we tackle the same issue in decoding with a novel negative sampling decoder that learns from negative samples to avoid yielding repetitive topics. Through extensive experiments on real-world datasets, we demonstrate that our model substantially improves short text topic modeling performance and outperforms both strong traditional and neural baselines under extreme data sparsity, producing high-quality topics.
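To make the idea of "peakier" topic distributions concrete, here is a minimal sketch of generic nearest-neighbor vector quantization applied to a topic distribution. It is not the authors' implementation: the codebook layout, the `PEAK` value, and the function names `quantize` and `entropy` are assumptions chosen for illustration, and the abstract does not specify how the quantization is actually performed.

```python
import math

def softmax(scores):
    """Turn raw scores into a probability distribution."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def quantize(theta, codebook):
    """Return the codebook vector closest to theta (squared Euclidean distance)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(codebook, key=lambda e: sq_dist(theta, e))

def entropy(p):
    """Shannon entropy in nats; lower means a peakier distribution."""
    return -sum(x * math.log(x) for x in p if x > 0)

# Hypothetical codebook (an assumption): one near-one-hot vector per topic.
K = 4
PEAK = 0.91
codebook = [
    [PEAK if j == k else (1 - PEAK) / (K - 1) for j in range(K)]
    for k in range(K)
]

# A fairly flat topic distribution, as a sparse short text might produce.
theta = softmax([1.0, 0.6, 0.2, 0.1])
theta_q = quantize(theta, codebook)

print("before:", [round(x, 3) for x in theta], "entropy", round(entropy(theta), 3))
print("after: ", [round(x, 3) for x in theta_q], "entropy", round(entropy(theta_q), 3))
```

In this toy setup the flat input is snapped to the codebook vector that concentrates most of its mass on the dominant topic, so the quantized distribution has lower entropy than the original.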

Connected Papers in EMNLP2020

Similar Papers

Neural Topic Modeling with Cycle-Consistent Adversarial Training

Xuemeng Hu, Rui Wang, Deyu Zhou, Yuxuan Xiong,

Tired of Topic Models? Clusters of Pretrained Word Embeddings Make for Fast and Good Topics too!

Suzanna Sia, Ayush Dalmia, Sabrina J. Mielke,

A Neural Generative Model for Joint Learning Topics and Topic-Specific Word Embeddings

Lixing Zhu, Deyu Zhou, Yulan He,