Look at the First Sentence: Position Bias in Question Answering

Miyoung Ko, Jinhyuk Lee, Hyunjae Kim, Gangwoo Kim, Jaewoo Kang

Question Answering Long Paper

You can open the pre-recorded video in a separate window.

Abstract:

Many extractive question answering models are trained to predict start and end positions of answers. The choice of predicting answers as positions is mainly due to its simplicity and effectiveness. In this study, we hypothesize that when the distribution of the answer positions is highly skewed in the training set (e.g., answers lie only in the k-th sentence of each passage), QA models predicting answers as positions can learn spurious positional cues and fail to give answers in different positions. We first illustrate this position bias in popular extractive QA models such as BiDAF and BERT and thoroughly examine how position bias propagates through each layer of BERT. To safely deliver position information without position bias, we train models with various de-biasing methods including entropy regularization and bias ensembling. Among them, we found that using the prior distribution of answer positions as a bias model is very effective at reducing position bias, recovering the performance of BERT from 37.48% to 81.64% when trained on a biased SQuAD dataset.

NOTE: Video may display a random order of authors.

Correct author list is at the top of this page.

Connected Papers in EMNLP2020

Similar Papers

Don't Read Too Much Into It: Adaptive Computation for Open-Domain Question Answering

Yuxiang Wu, Sebastian Riedel, Pasquale Minervini, Pontus Stenetorp,

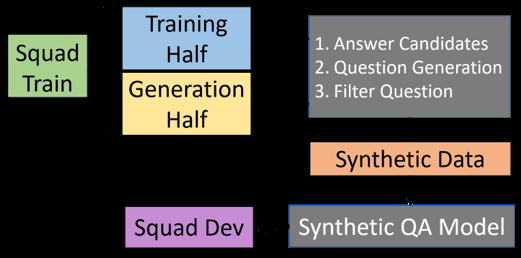

Training Question Answering Models From Synthetic Data

Raul Puri, Ryan Spring, Mohammad Shoeybi, Mostofa Patwary, Bryan Catanzaro,

F1 is Not Enough! Models and Evaluation Towards User-Centered Explainable Question Answering

Hendrik Schuff, Heike Adel, Ngoc Thang Vu,

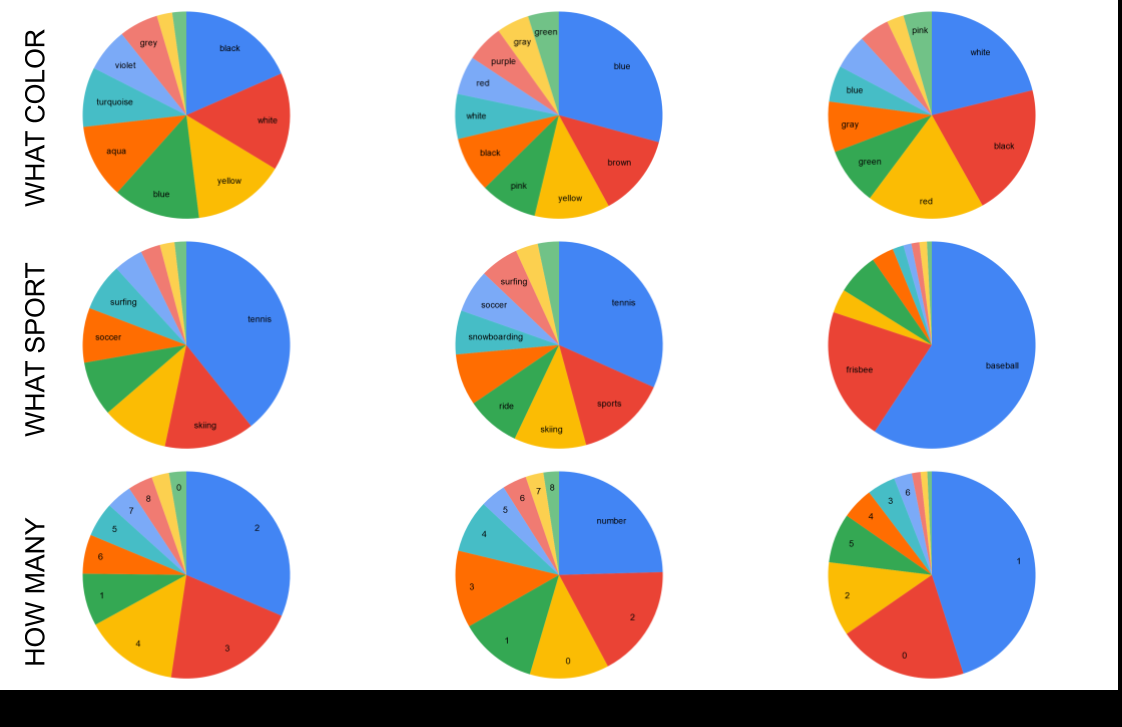

MUTANT: A Training Paradigm for Out-of-Distribution Generalization in Visual Question Answering

Tejas Gokhale, Pratyay Banerjee, Chitta Baral, Yezhou Yang,