An Unsupervised Sentence Embedding Method by Mutual Information Maximization

Yan Zhang, Ruidan He, Zuozhu Liu, Kwan Hui Lim, Lidong Bing

Semantics: Sentence-level Semantics, Textual Inference and Other areas (Long Paper)

Abstract:

BERT is inefficient for sentence-pair tasks such as clustering or semantic search because it must evaluate combinatorially many sentence pairs, which is very time-consuming. Sentence BERT (SBERT) attempted to solve this challenge by learning semantically meaningful representations of single sentences, so that similarity comparisons are easy to compute. However, SBERT is trained on a corpus of high-quality labeled sentence pairs, which limits its application to tasks where labeled data is extremely scarce. In this paper, we propose a lightweight extension on top of BERT and a novel self-supervised learning objective based on mutual information maximization strategies to derive meaningful sentence embeddings in an unsupervised manner. Unlike SBERT, our method is not restricted by the availability of labeled data, so it can be applied to different domain-specific corpora. Experimental results show that the proposed method significantly outperforms other unsupervised sentence embedding baselines on common semantic textual similarity (STS) tasks and downstream supervised tasks. It also outperforms SBERT in a setting where in-domain labeled data is not available, and achieves performance competitive with supervised methods on various tasks.
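To make the efficiency argument concrete, the following minimal sketch contrasts the number of joint pair evaluations with the number of single-sentence encoder passes, and shows how fixed embeddings reduce comparison to cheap vector arithmetic. It is an illustration only, not the paper's implementation: the helper names (`cross_encoder_passes`, `cosine`, `most_similar_pair`) and the toy vectors are hypothetical, and cosine similarity is assumed here as the comparison function.

```python
import math
from itertools import combinations


def cross_encoder_passes(n: int) -> int:
    """Forward passes needed when every unordered sentence pair is scored jointly."""
    return n * (n - 1) // 2


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar_pair(embeddings):
    """Return the indices (i, j) of the closest pair under cosine similarity."""
    return max(
        combinations(range(len(embeddings)), 2),
        key=lambda ij: cosine(embeddings[ij[0]], embeddings[ij[1]]),
    )


# Hypothetical toy vectors standing in for sentence-encoder outputs.
toy_embeddings = [
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
]

# Scoring all pairs jointly among 10,000 sentences needs 49,995,000 passes,
# whereas embedding each sentence once needs only 10,000 encoder passes.
print(cross_encoder_passes(10_000))       # 49995000
print(most_similar_pair(toy_embeddings))  # (0, 1)
```

With embeddings computed once per sentence, the pairwise work that remains is vector arithmetic rather than repeated model inference.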

Connected Papers in EMNLP2020

Similar Papers

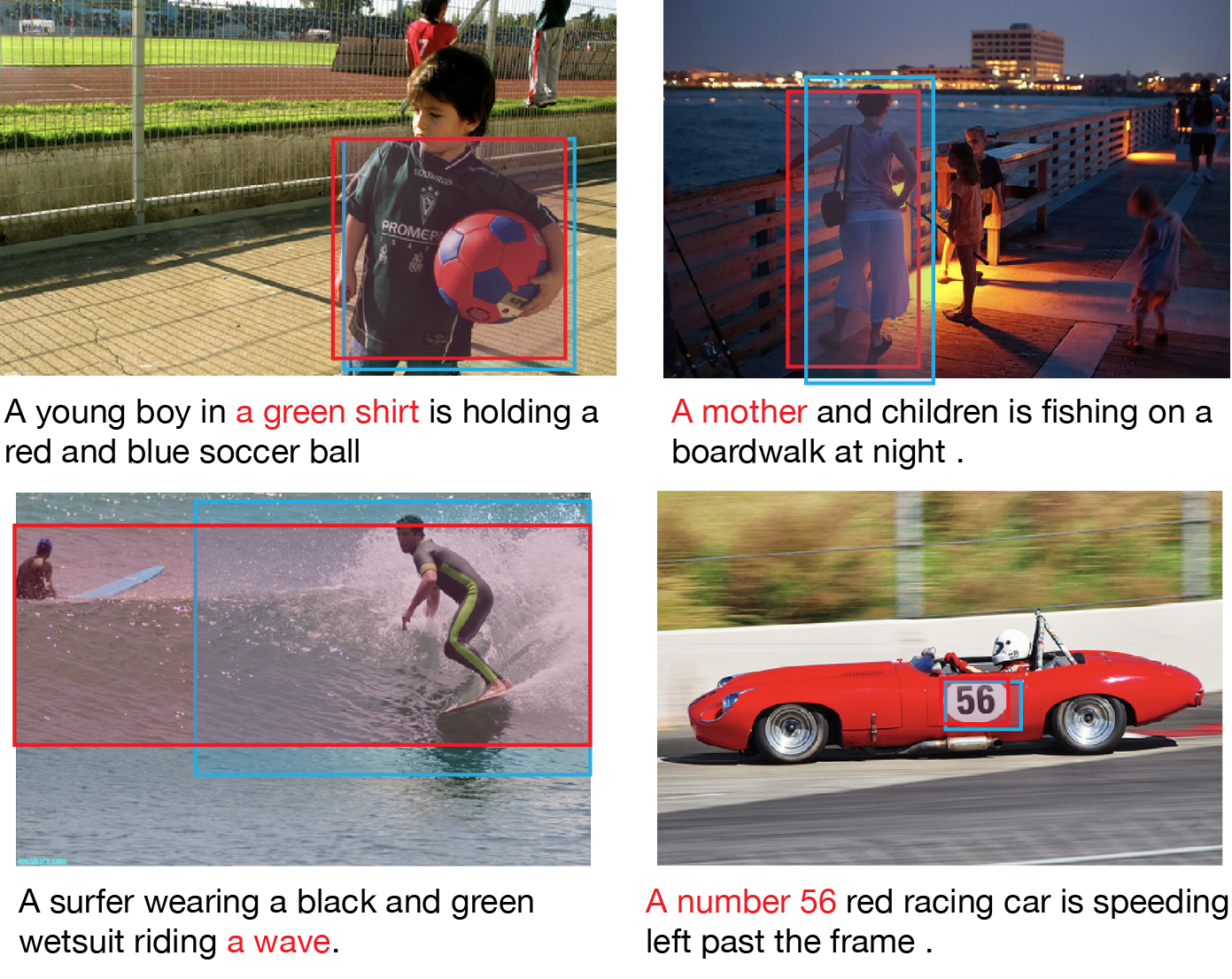

MAF: Multimodal Alignment Framework for Weakly-Supervised Phrase Grounding

Qinxin Wang, Hao Tan, Sheng Shen, Michael Mahoney, Zhewei Yao

Q-learning with Language Model for Edit-based Unsupervised Summarization

Ryosuke Kohita, Akifumi Wachi, Yang Zhao, Ryuki Tachibana

Semi-Supervised Bilingual Lexicon Induction with Two-way Interaction

Xu Zhao, Zihao Wang, Hao Wu, Yong Zhang

Semantic Label Smoothing for Sequence to Sequence Problems

Michal Lukasik, Himanshu Jain, Aditya Menon, Seungyeon Kim, Srinadh Bhojanapalli, Felix Yu, Sanjiv Kumar