T3: Tree-Autoencoder Constrained Adversarial Text Generation for Targeted Attack

Boxin Wang, Hengzhi Pei, Boyuan Pan, Qian Chen, Shuohang Wang, Bo Li

Machine Learning for NLP, Long Paper


Abstract:

Adversarial attacks against natural language processing systems, which make seemingly innocuous modifications to inputs, can induce arbitrary mistakes in the target models. Although they raise great concern, such attacks can be leveraged to estimate the robustness of NLP models. Compared with adversarial example generation in continuous data domains (e.g., images), generating adversarial text that preserves the original meaning is challenging because the text space is discrete and non-differentiable. To handle these challenges, we propose T3, a target-controllable adversarial attack framework that is applicable to a range of NLP tasks. In particular, we propose a tree-based autoencoder to embed the discrete text data into a continuous representation space, upon which we optimize the adversarial perturbation. A novel tree-based decoder is then applied to regularize the syntactic correctness of the generated text and to manipulate it at either the sentence level (T3(Sent)) or the word level (T3(Word)). We consider two of the most representative NLP tasks: sentiment analysis and question answering (QA). Extensive experimental results and human studies show that adversarial texts generated by T3 can successfully manipulate NLP models into outputting the targeted incorrect answer without misleading human readers. Moreover, we show that the generated adversarial texts have high transferability, which enables black-box attacks in practice. Our work sheds light on an effective and general way to examine the robustness of NLP models. Our code is publicly available at https://github.com/AI-secure/T3/.
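The core idea of optimizing a targeted perturbation in a continuous representation space can be illustrated with a minimal sketch. This is not the T3 implementation: the tree-based autoencoder and decoder are omitted entirely, a toy linear softmax classifier stands in for the target model, and every name here (`predict`, `targeted_perturbation`, `W`, `z`, the penalty weight `c`) is hypothetical. It only shows gradient descent on a perturbation `delta` that pushes an embedding toward a chosen target class while a norm penalty keeps the perturbation small.

```python
import math

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict(W, z):
    # Toy stand-in for the target model: class probabilities of softmax(W z).
    logits = [sum(w * x for w, x in zip(row, z)) for row in W]
    return softmax(logits)

def argmax(values):
    return max(range(len(values)), key=lambda k: values[k])

def targeted_perturbation(W, z, target, steps=200, lr=0.1, c=0.01):
    # Gradient descent on: -log p(target | z + delta) + c * ||delta||^2
    delta = [0.0] * len(z)
    for _ in range(steps):
        z_adv = [a + b for a, b in zip(z, delta)]
        probs = predict(W, z_adv)
        # Gradient of the cross-entropy w.r.t. the logits: probs - onehot(target)
        g_logits = [p - (1.0 if k == target else 0.0) for k, p in enumerate(probs)]
        # Chain rule through the linear layer, plus the gradient of the norm penalty
        grad = [
            sum(W[k][j] * g_logits[k] for k in range(len(W))) + 2.0 * c * delta[j]
            for j in range(len(z))
        ]
        delta = [d - lr * g for d, g in zip(delta, grad)]
    return delta

# Hypothetical 2-class model and a 2-dimensional "embedding" classified as class 0.
W = [[1.0, 0.0], [0.0, 1.0]]
z = [2.0, 0.0]
target = 1

delta = targeted_perturbation(W, z, target)
z_adv = [a + b for a, b in zip(z, delta)]
print("original class:", argmax(predict(W, z)))
print("adversarial class:", argmax(predict(W, z_adv)))
```

In a pipeline like the one the abstract describes, the perturbed embedding would still have to be decoded back into text; that decoding step, and the syntactic constraints it imposes, are exactly what this sketch leaves out.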



Similar Papers

Adversarial Attack and Defense of Structured Prediction Models

Wenjuan Han, Liwen Zhang, Yong Jiang, Kewei Tu

BERT-ATTACK: Adversarial Attack Against BERT Using BERT

Linyang Li, Ruotian Ma, Qipeng Guo, Xiangyang Xue, Xipeng Qiu

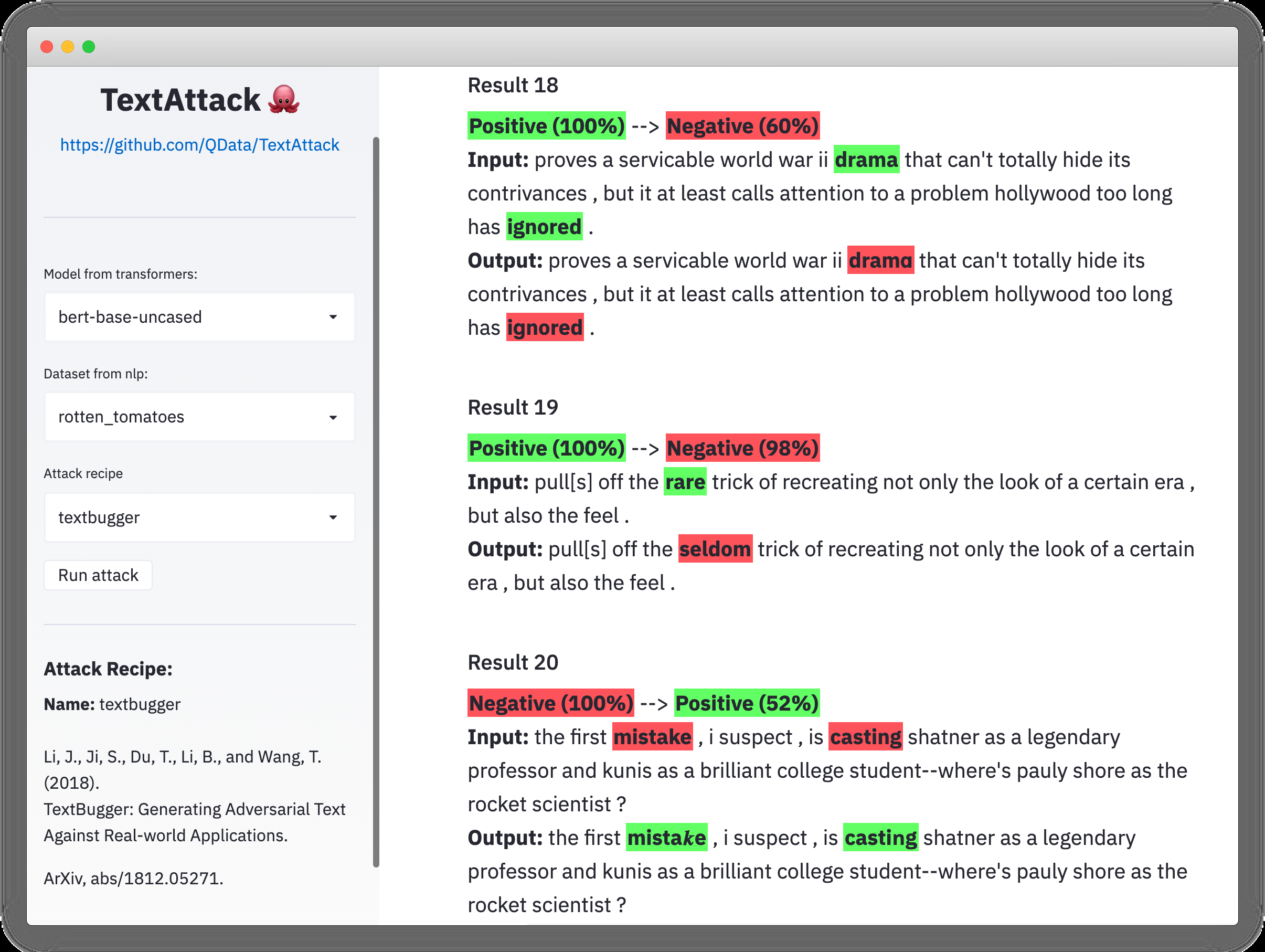

TextAttack: A Framework for Adversarial Attacks, Data Augmentation, and Adversarial Training in NLP

John Morris, Eli Lifland, Jin Yong Yoo, Jake Grigsby, Di Jin, Yanjun Qi

Adversarial Self-Supervised Data-Free Distillation for Text Classification

Xinyin Ma, Yongliang Shen, Gongfan Fang, Chen Chen, Chenghao Jia, Weiming Lu