Reading Between the Lines: Exploring Infilling in Visual Narratives

Khyathi Raghavi Chandu, Ruo-Ping Dong, Alan W Black

Language Generation Short Paper


Abstract:

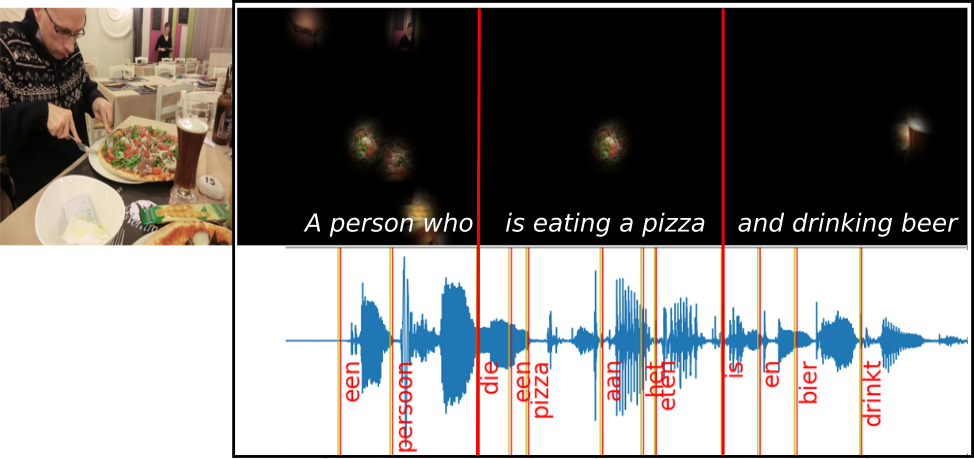

Generating long-form narratives such as stories and procedures from multiple modalities has been a long-standing dream for artificial intelligence. In this regard, there is often crucial subtext that is derived from the surrounding contexts. General seq2seq training methods leave models ill-equipped to bridge the gap between these neighbouring contexts. In this paper, we tackle this problem by using infilling techniques, which involve predicting missing steps in a narrative, while generating textual descriptions from a sequence of images. We also present a new large-scale visual procedure telling (ViPT) dataset, rich in such contextual dependencies, with a total of 46,200 procedures and around 340k paired images and textual descriptions. Generating steps with the infilling technique proves effective on visual procedures, yielding more coherent text. We show a METEOR score of 27.51 on procedures, which is higher than the state of the art on visual storytelling. We also demonstrate the effects of interposing new text for missing images during inference. The code and the dataset will be publicly available at https://visual-narratives.github.io/Visual-Narratives/.
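To make the infilling idea concrete, here is a minimal sketch of how infilling training examples could be built from a visual procedure. It is not the authors' implementation. It assumes each step already has a precomputed image feature vector, that a hidden or missing image is represented by a zero vector, and that the model's target is the text of the hidden step. The helper names `mask_step` and `infilling_examples` are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Feature = List[float]  # assumed: one precomputed feature vector per image


def mask_step(images: Sequence[Optional[Feature]], masked_index: int) -> List[Feature]:
    """Hypothetical helper: copy the image sequence with step `masked_index` zeroed.

    Steps whose image is already missing (None) are zeroed as well, which
    mimics an inference setting where text must be generated without an image.
    """
    if not images:
        raise ValueError("image sequence is empty")
    # Take the feature dimension from the first step that has an image.
    dim = next((len(f) for f in images if f is not None), None)
    if dim is None:
        raise ValueError("at least one step must have an image feature")
    if not 0 <= masked_index < len(images):
        raise IndexError("masked_index out of range")
    return [
        [0.0] * dim if (i == masked_index or f is None) else list(f)
        for i, f in enumerate(images)
    ]


def infilling_examples(
    images: Sequence[Optional[Feature]], texts: Sequence[str]
) -> List[Tuple[List[Feature], int, str]]:
    """Hypothetical helper: one example per interior step.

    Each example is (masked image sequence, hidden step index, target text).
    Only interior steps are hidden, so every target has context on both sides.
    """
    if len(images) != len(texts):
        raise ValueError("images and texts must have the same length")
    return [(mask_step(images, k), k, texts[k]) for k in range(1, len(images) - 1)]
```

For example, a three-step procedure yields one example in which the middle image is hidden and "Add pasta." is the text to infill:

```python
images = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
texts = ["Boil water.", "Add pasta.", "Drain and serve."]
print(infilling_examples(images, texts))
```

Restricting the hidden step to interior positions is a design choice of this sketch; it keeps the task a true "between the lines" prediction rather than continuation.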


Connected Papers in EMNLP 2020

Similar Papers

STORIUM: A Dataset and Evaluation Platform for Machine-in-the-Loop Story Generation

Nader Akoury, Shufan Wang, Josh Whiting, Stephen Hood, Nanyun Peng, Mohit Iyyer

Generating Image Descriptions via Sequential Cross-Modal Alignment Guided by Human Gaze

Ece Takmaz, Sandro Pezzelle, Lisa Beinborn, Raquel Fernández