Shallow-to-Deep Training for Neural Machine Translation

Bei Li, Ziyang Wang, Hui Liu, Yufan Jiang, Quan Du, Tong Xiao, Huizhen Wang, Jingbo Zhu

Machine Translation and Multilinguality Long Paper

You can open the pre-recorded video in a separate window.

Abstract:

Deep encoders have been proven to be effective in improving neural machine translation (NMT) systems, but training an extremely deep encoder is time consuming. Moreover, why deep models help NMT is an open question. In this paper, we investigate the behavior of a well-tuned deep Transformer system. We find that stacking layers is helpful in improving the representation ability of NMT models and adjacent layers perform similarly. This inspires us to develop a shallow-to-deep training method that learns deep models by stacking shallow models. In this way, we successfully train a Transformer system with a 54-layer encoder. Experimental results on WMT’16 English-German and WMT’14 English-French translation tasks show that it is 1:4 faster than training from scratch, and achieves a BLEU score of 30:33 and 43:29 on two tasks. The code is publicly available at https://github.com/libeineu/ SDT-Training.

NOTE: Video may display a random order of authors.

Correct author list is at the top of this page.

Connected Papers in EMNLP2020

Similar Papers

Pronoun-Targeted Fine-tuning for NMT with Hybrid Losses

Prathyusha Jwalapuram, Shafiq Joty, Youlin Shen,

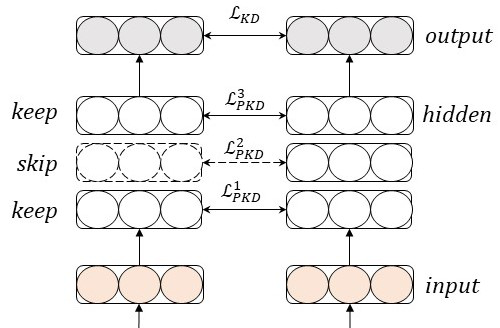

Why Skip If You Can Combine: A Simple Knowledge Distillation Technique for Intermediate Layers

Yimeng Wu, Peyman Passban, Mehdi Rezagholizadeh, Qun Liu,

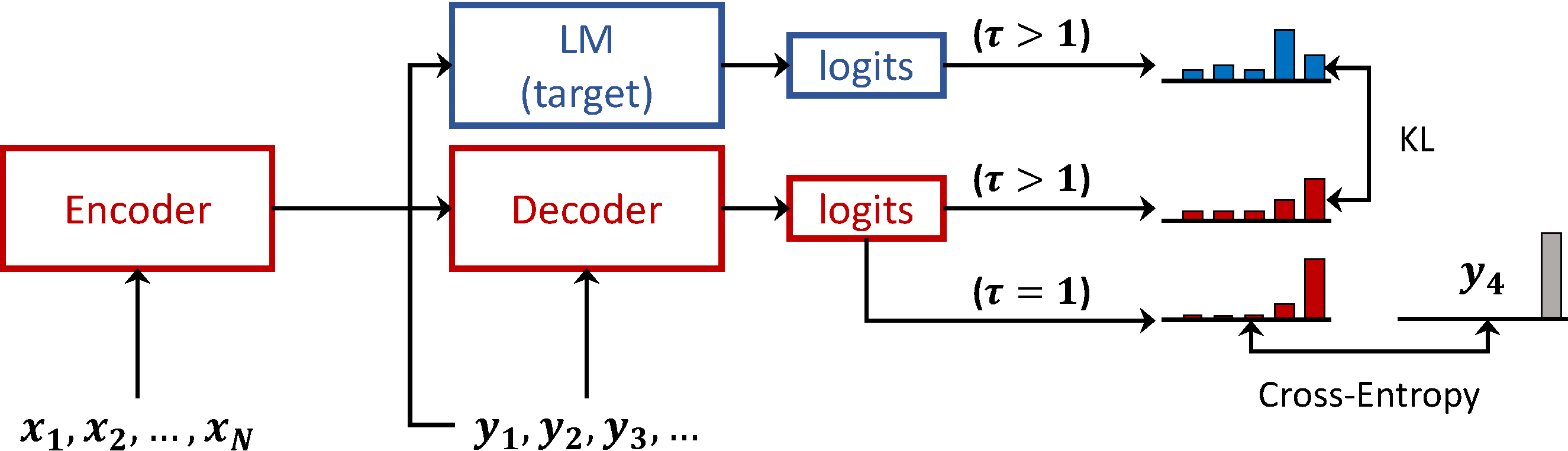

Language Model Prior for Low-Resource Neural Machine Translation

Christos Baziotis, Barry Haddow, Alexandra Birch,

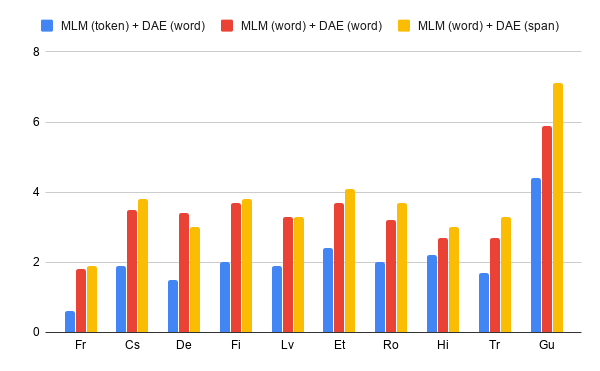

Multi-task Learning for Multilingual Neural Machine Translation

Yiren Wang, ChengXiang Zhai, Hany Hassan,