Pretrained Language Model Embryology: The Birth of ALBERT

Cheng-Han Chiang, Sung-Feng Huang, Hung-yi Lee

Interpretability and Analysis of Models for NLP Short Paper

You can open the pre-recorded video in a separate window.

Abstract:

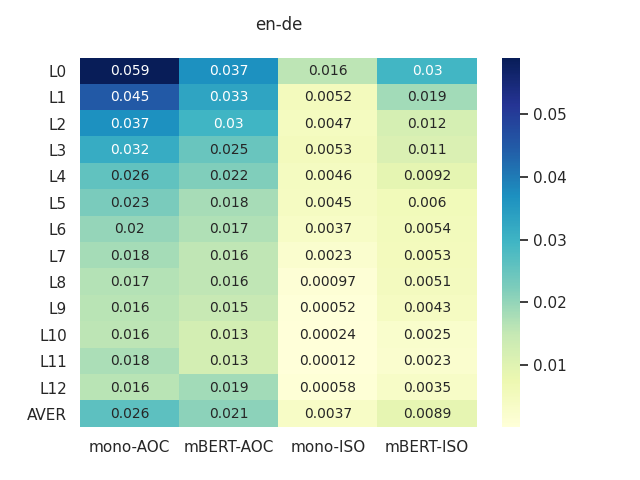

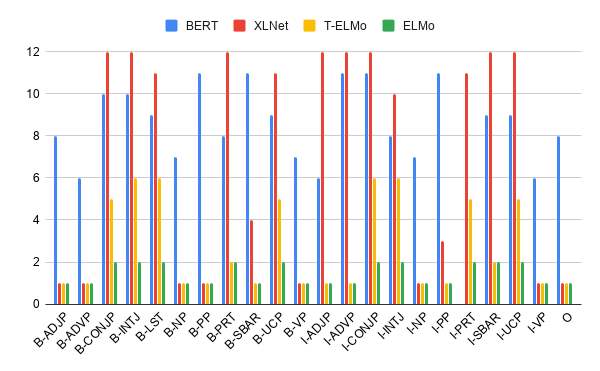

While behaviors of pretrained language models (LMs) have been thoroughly examined, what happened during pretraining is rarely studied. We thus investigate the developmental process from a set of randomly initialized parameters to a totipotent language model, which we refer to as the \textit{embryology} of a pretrained language model. Our results show that ALBERT learns to reconstruct and predict tokens of different parts of speech (POS) in different learning speeds during pretraining. We also find that linguistic knowledge and world knowledge do not generally improve as pretraining proceeds, nor do downstream tasks' performance. These findings suggest that knowledge of a pretrained model varies during pretraining, and having more pretrain steps does not necessarily provide a model with more comprehensive knowledge. We provide source codes and pretrained models to reproduce our results at \url{https://github.com/d223302/albert-embryology}.

NOTE: Video may display a random order of authors.

Correct author list is at the top of this page.

Connected Papers in EMNLP2020

Similar Papers

oLMpics - On what Language Model Pre-training Captures

Alon Talmor, Yanai Elazar, Yoav Goldberg, Jonathan Berant,

Probing Pretrained Language Models for Lexical Semantics

Ivan Vulić, Edoardo Maria Ponti, Robert Litschko, Goran Glavaš, Anna Korhonen,

Analyzing Individual Neurons in Pre-trained Language Models

Nadir Durrani, Hassan Sajjad, Fahim Dalvi, Yonatan Belinkov,

Reusing a Pretrained Language Model on Languages with Limited Corpora for Unsupervised NMT

Alexandra Chronopoulou, Dario Stojanovski, Alexander Fraser,