Leveraging Declarative Knowledge in Text and First-Order Logic for Fine-Grained Propaganda Detection

Ruize Wang, Duyu Tang, Nan Duan, Wanjun Zhong, Zhongyu Wei, Xuanjing Huang, Daxin Jiang, Ming Zhou

Semantics: Sentence-level Semantics, Textual Inference and Other Areas (Long Paper)

Abstract:

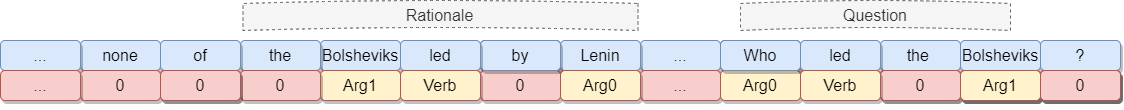

We study the detection of propagandistic text fragments in news articles. Instead of learning only from input-output pairs in the training data, we introduce an approach that injects declarative knowledge of fine-grained propaganda techniques. Specifically, we leverage declarative knowledge expressed in both first-order logic and natural language. The former refers to the logical consistency between coarse- and fine-grained predictions, which is used to regularize the training process with propositional Boolean expressions. The latter refers to the literal definition of each propaganda technique, which is used to obtain class representations for regularizing the model parameters. We conduct experiments on the Propaganda Techniques Corpus, a large manually annotated dataset for fine-grained propaganda detection. Experiments show that our method achieves superior performance, demonstrating that leveraging declarative knowledge can help the model make more accurate predictions.
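To make the idea of logical-consistency regularization more concrete, the following is a minimal sketch and not the authors' implementation. It assumes a single rule of the form "if a fine-grained technique is predicted, the coarse-grained prediction should be propaganda", relaxes the implication with a product-style soft logic, and turns it into a penalty. The function names, the choice of relaxation, and the averaging over techniques are all assumptions made for illustration; the abstract does not specify them.

```python
import math


def implication_penalty(p_fine: float, p_coarse: float, eps: float = 1e-12) -> float:
    """Soft penalty for violating the rule: fine-grained technique => propaganda.

    Assumption: the implication A => B is relaxed as truth = 1 - a + a * b,
    and the penalty is the negative log of that truth value. The penalty is
    0 when the rule is fully satisfied and grows as it is violated.
    """
    truth = 1.0 - p_fine + p_fine * p_coarse
    return -math.log(max(truth, eps))


def consistency_loss(fine_probs, coarse_prob: float) -> float:
    """Average the penalty over all fine-grained technique probabilities.

    fine_probs: hypothetical per-technique probabilities for one input.
    coarse_prob: hypothetical probability that the input is propaganda.
    """
    penalties = [implication_penalty(p, coarse_prob) for p in fine_probs]
    return sum(penalties) / len(penalties)


if __name__ == "__main__":
    # Inconsistent: a technique is predicted confidently, but the coarse
    # classifier says "not propaganda".
    print(consistency_loss([0.9, 0.1], coarse_prob=0.1))
    # Consistent: both levels agree that the input is propagandistic.
    print(consistency_loss([0.9, 0.1], coarse_prob=0.9))
```

In a training loop, a term like this would typically be added to the supervised loss with a weighting coefficient, so that inconsistent coarse- and fine-grained predictions are discouraged without replacing the labeled objective.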

Connected Papers in EMNLP 2020

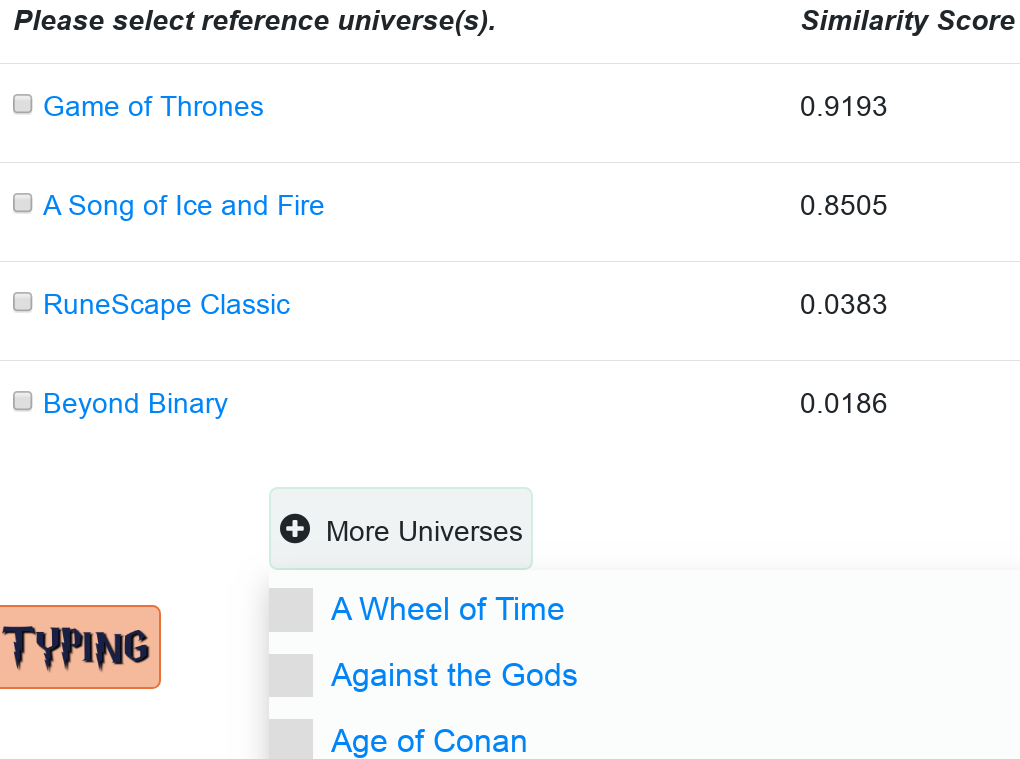

Similar Papers

ENTYFI: A System for Fine-grained Entity Typing in Fictional Texts

Cuong Xuan Chu, Simon Razniewski, Gerhard Weikum

Detecting Fine-Grained Cross-Lingual Semantic Divergences without Supervision by Learning to Rank

Eleftheria Briakou, Marine Carpuat

Compositional and Lexical Semantics in RoBERTa, BERT and DistilBERT: A Case Study on CoQA

Ieva Staliūnaitė, Ignacio Iacobacci

Exposing Shallow Heuristics of Relation Extraction Models with Challenge Data

Shachar Rosenman, Alon Jacovi, Yoav Goldberg