How Much Knowledge Can You Pack Into the Parameters of a Language Model?

Adam Roberts, Colin Raffel, Noam Shazeer

Question Answering Short Paper

Abstract:

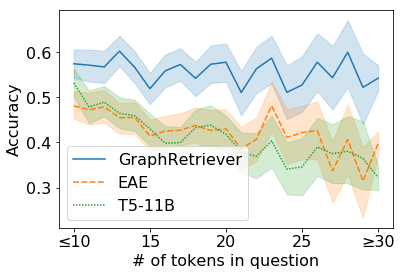

It has recently been observed that neural language models trained on unstructured text can implicitly store and retrieve knowledge using natural language queries. In this short paper, we measure the practical utility of this approach by fine-tuning pre-trained models to answer questions without access to any external context or knowledge. We show that this approach scales with model size and performs competitively with open-domain systems that explicitly retrieve answers from an external knowledge source when answering questions. To facilitate reproducibility and future work, we release our code and trained models.

Connected Papers in EMNLP2020

Similar Papers

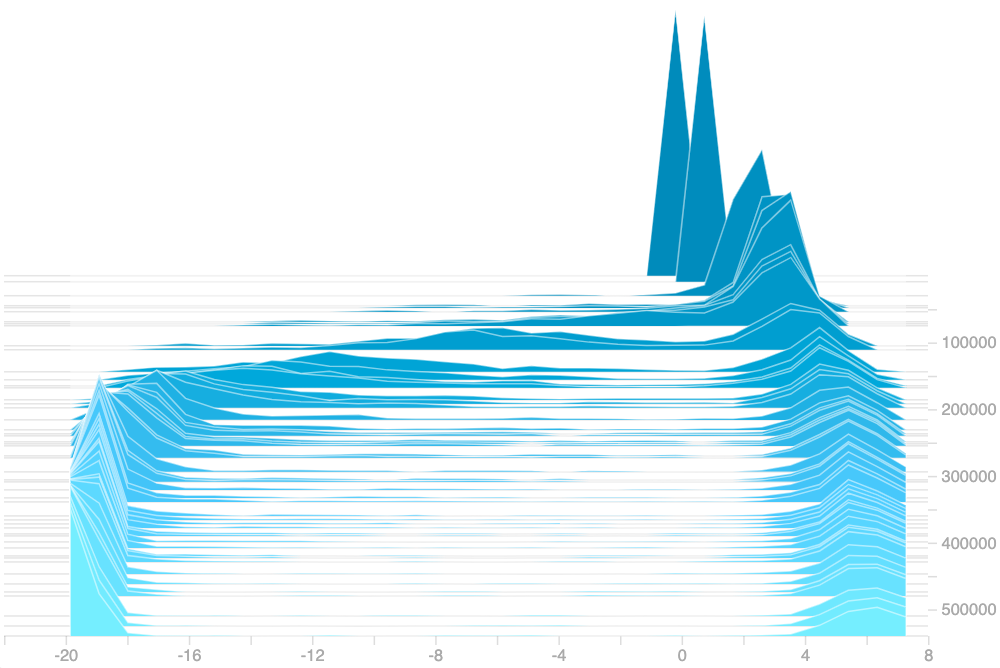

Improving Neural Topic Models using Knowledge Distillation

Alexander Miserlis Hoyle, Pranav Goel, Philip Resnik

Learning from Context or Names? An Empirical Study on Neural Relation Extraction

Hao Peng, Tianyu Gao, Xu Han, Yankai Lin, Peng Li, Zhiyuan Liu, Maosong Sun, Jie Zhou

Entities as Experts: Sparse Memory Access with Entity Supervision

Thibault Févry, Livio Baldini Soares, Nicholas FitzGerald, Eunsol Choi, Tom Kwiatkowski