Local Additivity Based Data Augmentation for Semi-supervised NER

Jiaao Chen, Zhenghui Wang, Ran Tian, Zichao Yang, Diyi Yang

Machine Learning for NLP Long Paper

You can open the pre-recorded video in a separate window.

Abstract:

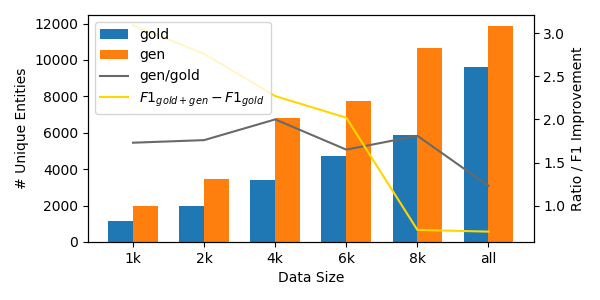

Named Entity Recognition (NER) is one of the first stages in deep language understanding yet current NER models heavily rely on human-annotated data. In this work, to alleviate the dependence on labeled data, we propose a Local Additivity based Data Augmentation (LADA) method for semi-supervised NER, in which we create virtual samples by interpolating sequences close to each other. Our approach has two variations: Intra-LADA and Inter-LADA, where Intra-LADA performs interpolations among tokens within one sentence, and Inter-LADA samples different sentences to interpolate. Through linear additions between sampled training data, LADA creates an infinite amount of labeled data and improves both entity and context learning. We further extend LADA to the semi-supervised setting by designing a novel consistency loss for unlabeled data. Experiments conducted on two NER benchmarks demonstrate the effectiveness of our methods over several strong baselines. We have publicly released our code at https://github.com/GT-SALT/LADA

NOTE: Video may display a random order of authors.

Correct author list is at the top of this page.

Connected Papers in EMNLP2020

Similar Papers

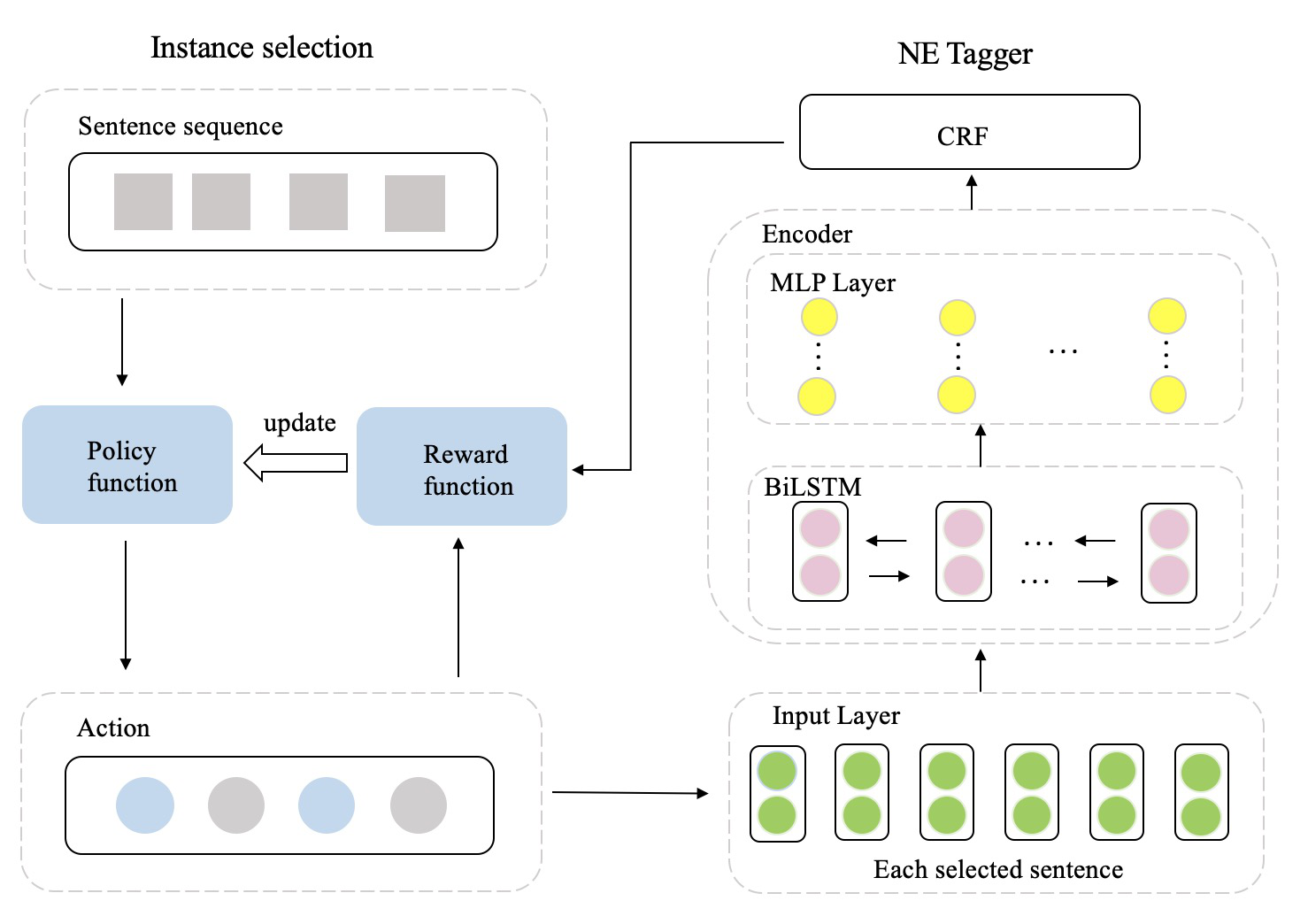

DAGA: Data Augmentation with a Generation Approach forLow-resource Tagging Tasks

Bosheng Ding, Linlin Liu, Lidong Bing, Canasai Kruengkrai, Thien Hai Nguyen, Shafiq Joty, Luo Si, Chunyan Miao,

Adversarial Self-Supervised Data-Free Distillation for Text Classification

Xinyin Ma, Yongliang Shen, Gongfan Fang, Chen Chen, Chenghao Jia, Weiming Lu,

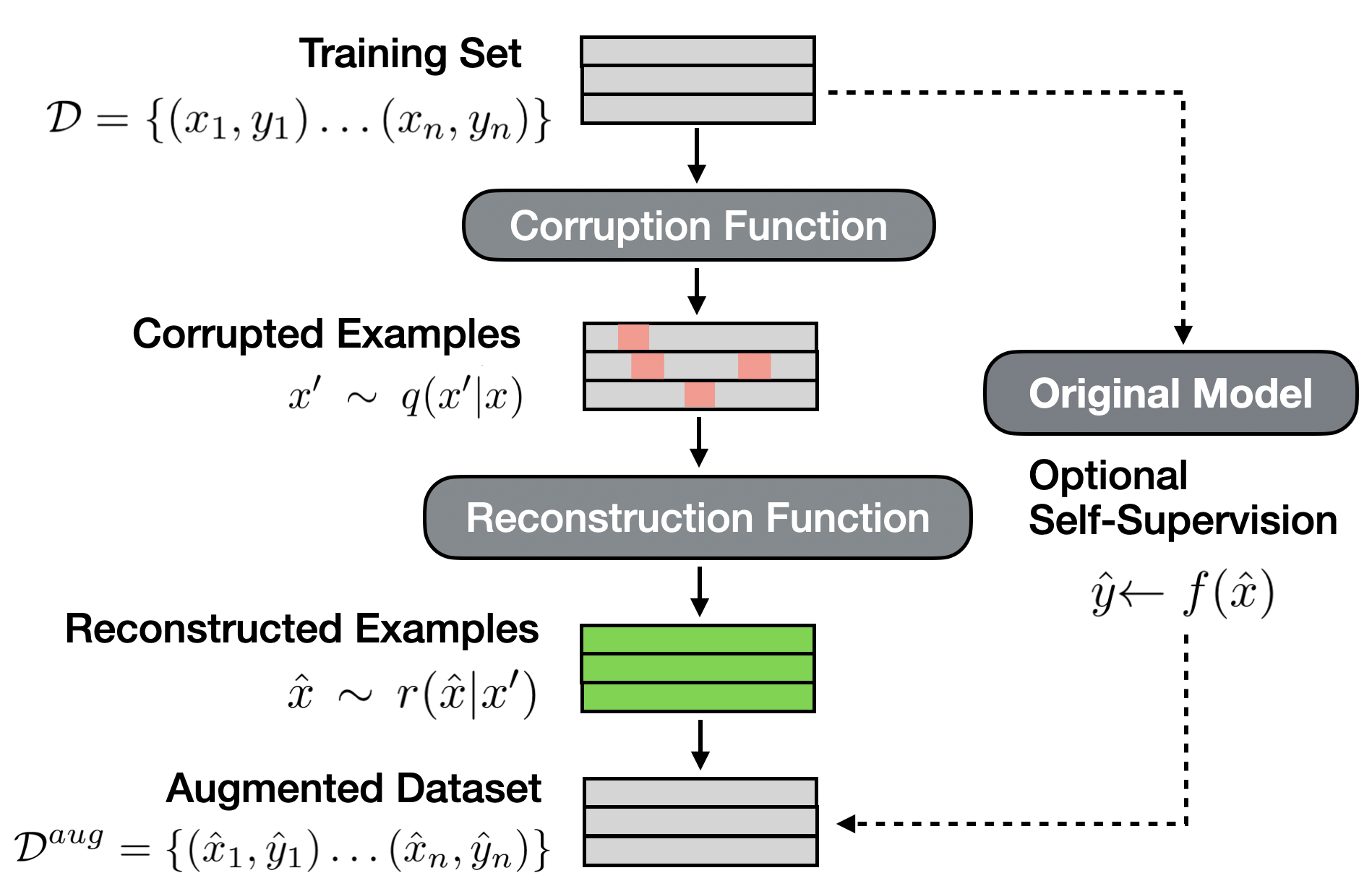

SSMBA: Self-Supervised Manifold Based Data Augmentation for Improving Out-of-Domain Robustness

Nathan Ng, Kyunghyun Cho, Marzyeh Ghassemi,