Pre-tokenization of Multi-word Expressions in Cross-lingual Word Embeddings

Naoki Otani, Satoru Ozaki, Xingyuan Zhao, Yucen Li, Micaelah St Johns, Lori Levin

Machine Translation and Multilinguality Long Paper

You can open the pre-recorded video in a separate window.

Abstract:

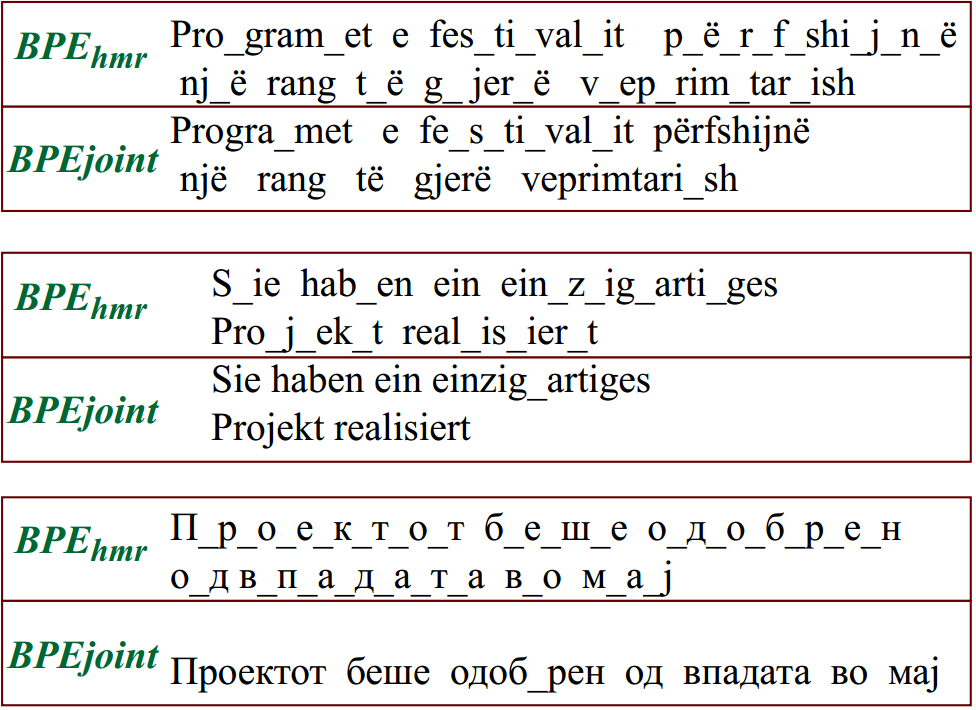

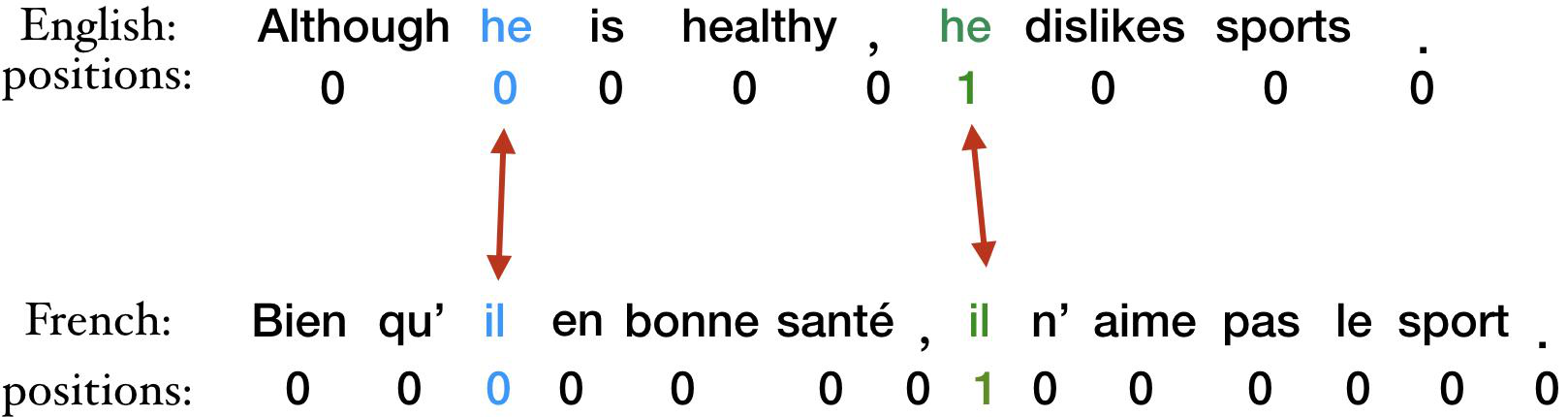

Cross-lingual word embedding (CWE) algorithms represent words in multiple languages in a unified vector space. Multi-Word Expressions (MWE) are common in every language. When training word embeddings, each component word of an MWE gets its own separate embedding, and thus, MWEs are not translated by CWEs. We propose a simple method for word translation of MWEs to and from English in ten languages: we first compile lists of MWEs in each language and then tokenize the MWEs as single tokens before training word embeddings. CWEs are trained on a word-translation task using the dictionaries that only contain single words. In order to evaluate MWE translation, we created bilingual word lists from multilingual WordNet that include single-token words and MWEs, and most importantly, include MWEs that correspond to single words in another language. We release these dictionaries to the research community. We show that the pre-tokenization of MWEs as single tokens performs better than averaging the embeddings of the individual tokens of the MWE. We can translate MWEs at a top-10 precision of 30-60%. The tokenization of MWEs makes the occurrences of single words in a training corpus more sparse, but we show that it does not pose negative impacts on single-word translations.

NOTE: Video may display a random order of authors.

Correct author list is at the top of this page.

Connected Papers in EMNLP2020

Similar Papers

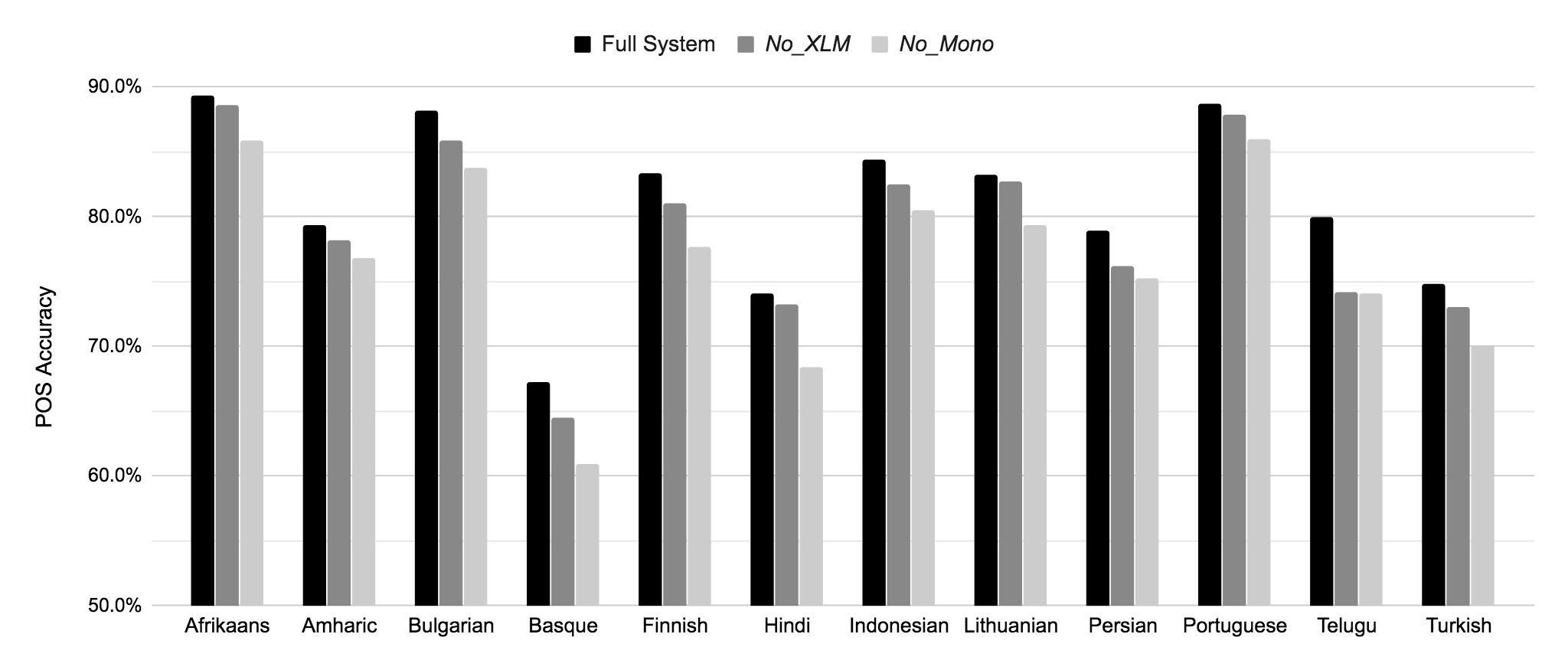

Unsupervised Cross-Lingual Part-of-Speech Tagging for Truly Low-Resource Scenarios

Ramy Eskander, Smaranda Muresan, Michael Collins,

Don't Neglect the Obvious: On the Role of Unambiguous Words in Word Sense Disambiguation

Daniel Loureiro, Jose Camacho-Collados,

Reusing a Pretrained Language Model on Languages with Limited Corpora for Unsupervised NMT

Alexandra Chronopoulou, Dario Stojanovski, Alexander Fraser,