What do Models Learn from Question Answering Datasets?

Priyanka Sen, Amir Saffari

Question Answering Long Paper

Abstract:

While models have reached superhuman performance on popular question answering (QA) datasets such as SQuAD, they have yet to outperform humans on the task of question answering itself. In this paper, we investigate whether models are learning reading comprehension from QA datasets by evaluating BERT-based models across five datasets. We evaluate models on their generalizability to out-of-domain examples, responses to missing or incorrect data, and ability to handle question variations. We find that no single dataset is robust to all of our experiments and identify shortcomings in both datasets and evaluation methods. Following our analysis, we make recommendations for building future QA datasets that better evaluate the task of question answering through reading comprehension. We also release code to convert QA datasets to a shared format for easier experimentation at https://github.com/amazon-research/qa-dataset-converter

Connected Papers in EMNLP2020

Similar Papers

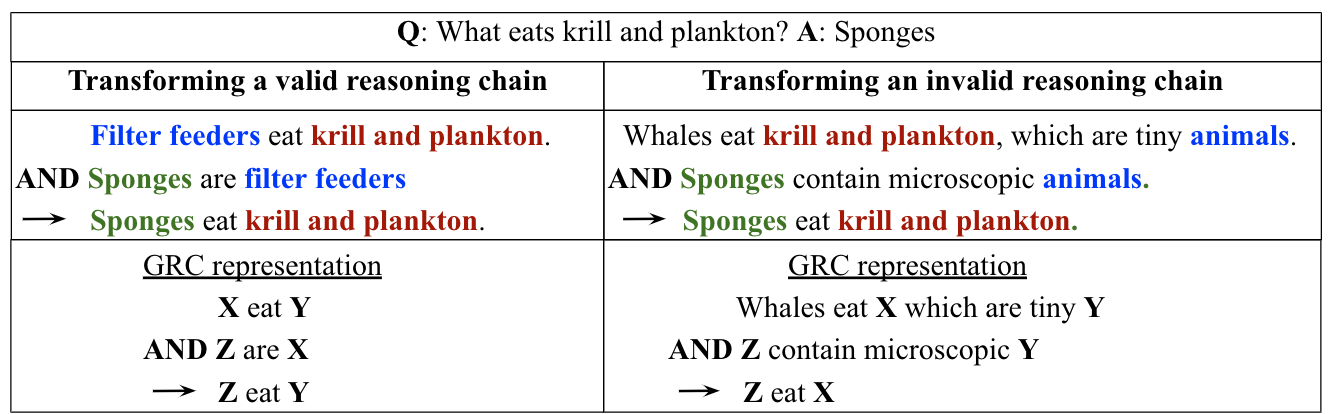

Learning to Explain: Datasets and Models for Identifying Valid Reasoning Chains in Multihop Question-Answering

Harsh Jhamtani, Peter Clark

What Does My QA Model Know? Devising Controlled Probes using Expert

Kyle Richardson, Ashish Sabharwal

Unsupervised Question Decomposition for Question Answering

Ethan Perez, Patrick Lewis, Wen-tau Yih, Kyunghyun Cho, Douwe Kiela

MOCHA: A Dataset for Training and Evaluating Generative Reading Comprehension Metrics

Anthony Chen, Gabriel Stanovsky, Sameer Singh, Matt Gardner